
LSST Makes a Strong Showing at AAS

SLACers of many stripes attended the 217th meeting of the American Astronomical Society in Seattle last week, and one of the big draws was the Large Synoptic Survey Telescope. The giant telescope enjoyed increased visibility at the conference, due in large part to its top ranking among large ground-based projects in the Astronomy and Astrophysics Decadal Survey, also known as Astro2010, released last August.

Kirk Gilmore, of the joint SLAC-Stanford Kavli Institute for Particle Astrophysics and Cosmology (KIPAC), presented a paper on one of the LSST's biggest attention grabbers: its camera. Gilmore is a member of the SLAC team leading the camera's design. Once built, it will be the largest digital camera in the world: more than nine feet long, four and a half feet in diameter, and weighing more than 6,600 pounds. Gilmore discussed progress on the design as well as some of the unique challenges facing the team, such as filter coatings that must be applied with a high degree of accuracy and detector chips whose surface imperfections must be held to microns or less.

"There are a lot of activities taking place to reduce the high risk inherent in certain components of the camera," Gilmore said, but he expressed confidence in the leadership of KIPAC scientist Steven Kahn and the expertise of SLAC project manager Nadine Kurita. AAS attendees, for their part, greeted his presentation with enthusiasm.

"The important thing about the AAS meeting is that it's attended by several thousand people in the astronomical community," Gilmore explained, "and the LSST as a whole was very well received."

Challenges also await the LSST data management team, which must handle the vast amounts of data generated by a telescope able to survey the entire visible sky twice a week in unprecedented detail. To prepare, the team is putting LSST scientific analysis tools through their paces on a simulated data set.

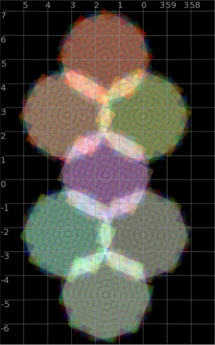

SLAC computing resources were among those used to create a highly detailed simulation of the observatory and camera, which then generated more than 800 simulated examples of the 3,200-megapixel images that will come from the actual camera. These simulated images can be processed with prototype LSST data management and analysis tools just as the real images will be, but long before any real images are available. LSST data management team members and others from SLAC helped deliver this package of simulated images and prototype tools to the LSST collaboration gathered in Seattle.

"This is the first time all these pieces have come together," said Gregory Dubois-Felsmann, system architect for the LSST Data Management System.

Simulating data by definition includes simulating how the information being captured is affected by its environment, including the atmosphere, the telescope and the camera. For example, KIPAC student member Chihway Chang presented a paper on how atmospheric blurring, as well as the LSST instrumentation itself, could affect the results of weak gravitational lensing studies. In weak lensing, the shapes of distant galaxies are subtly distorted by gravity as their light passes through concentrations of dark matter during its journey of billions of light-years to the LSST detectors. If this effect can be measured accurately, it will provide key information on the nature of the mysterious dark energy that appears to be pulling the universe apart. To extract that information from the data, however, physicists need to determine as precisely as possible how the environment will affect it.

"These are very subtle measurements," said Kahn.

Weak lensing is only one example of the many delicate, data-intensive uses of the LSST. Though first light for the giant telescope is still years away, the many presentations at the AAS meeting demonstrated that the work of learning how to learn from the flood of data the LSST promises to deliver is already well under way.

—by Lori Ann White

SLAC Today, January 18, 2011